Scraping Amazon reviews with Python is useful for competitor analysis, review monitoring, and market research. This article shows how to scrape product reviews from Amazon efficiently with Python, BeautifulSoup, and the Requests library.

Before diving into the scraping process, make sure the required Python libraries are installed:

pip install requests

pip install beautifulsoup4

We will focus on extracting product reviews from an Amazon page and walk through the scraping process step by step.

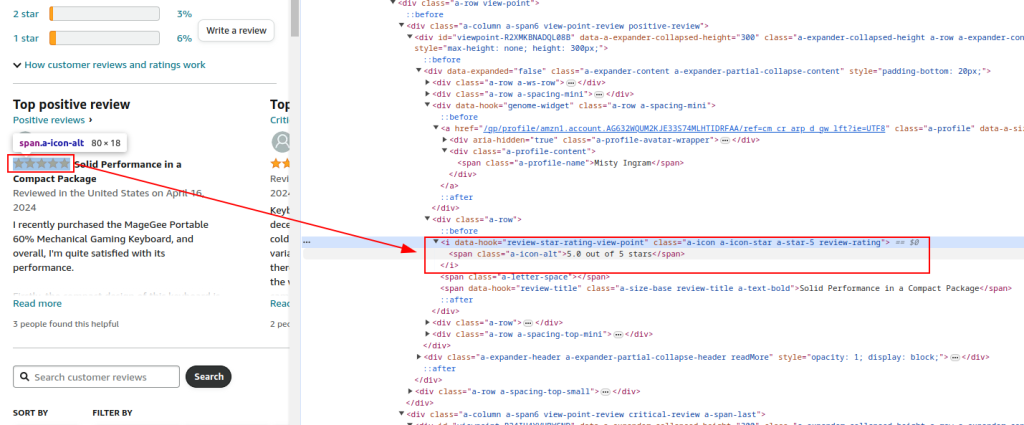

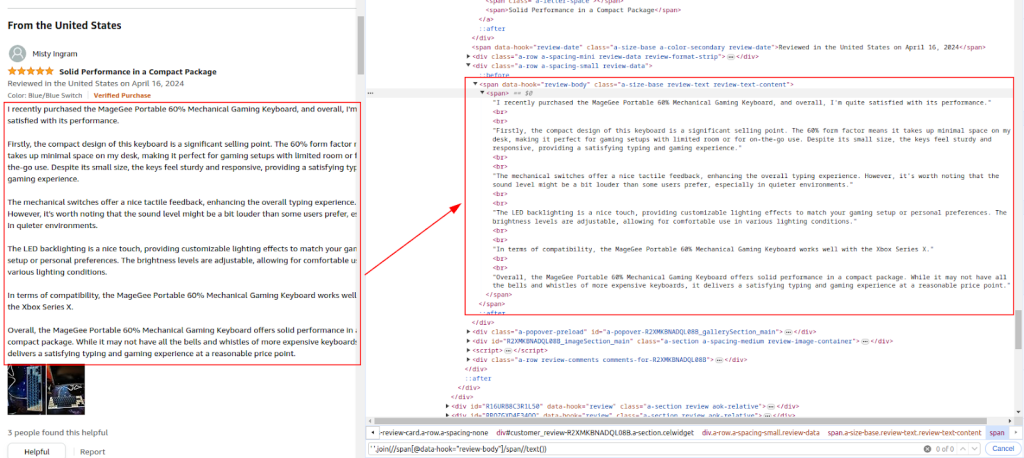

Inspect the HTML structure of the Amazon product reviews page to identify the elements we need: reviewer names, ratings, and comments.

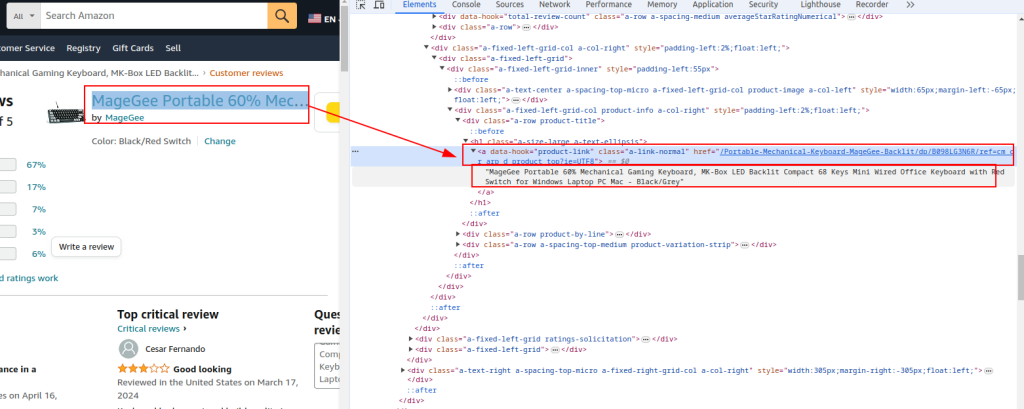

Product title and URL:

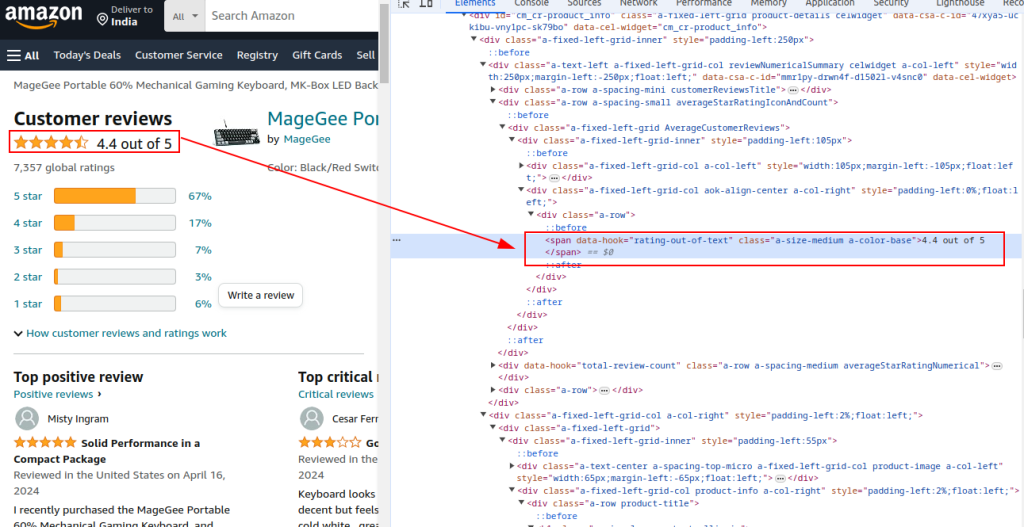

Total rating:

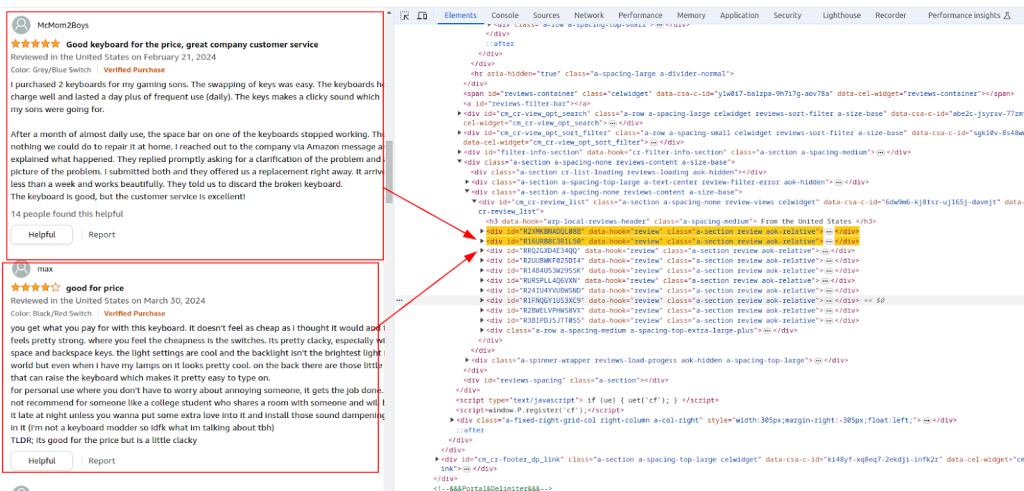

Review section:

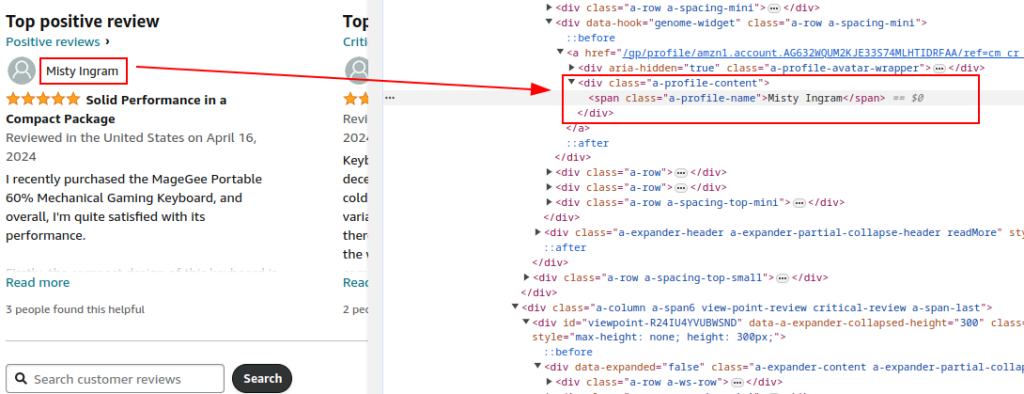

Author name:

Rating:

Comment:
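If you have saved a copy of the reviews page, one way to speed up this inspection is to list every `data-hook` attribute it contains. The following is a minimal sketch using only the standard library. The class name `DataHookCollector`, the function `list_data_hooks`, and the sample markup are assumptions made for illustration, not part of the original workflow, and the real page is far larger and may differ.

```python
from collections import Counter
from html.parser import HTMLParser


class DataHookCollector(HTMLParser):
    """Count every (tag, data-hook value) pair seen in an HTML document."""

    def __init__(self):
        super().__init__()
        self.hooks = Counter()

    def handle_starttag(self, tag, attrs):
        # attrs is a list of (name, value) pairs for the current tag
        for name, value in attrs:
            if name == "data-hook" and value:
                self.hooks[(tag, value)] += 1


def list_data_hooks(html):
    """Return a Counter of (tag, data-hook) pairs found in the given HTML."""
    collector = DataHookCollector()
    collector.feed(html)
    return collector.hooks


# Hypothetical, heavily simplified markup for illustration only
sample_html = """
<a data-hook="product-link" href="/dp/EXAMPLE">Example keyboard</a>
<div data-hook="review"><span class="a-profile-name">Alice</span></div>
<div data-hook="review"><span class="a-profile-name">Bob</span></div>
"""

for (tag, hook), count in list_data_hooks(sample_html).items():
    print(f"<{tag} data-hook={hook!r}> x{count}")
```

Hooks that repeat many times on a page are good candidates for per-review containers, while hooks that appear once tend to describe the product as a whole.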

Use the requests library to send an HTTP GET request to the Amazon product reviews page. Set headers that mimic legitimate browser behavior to avoid detection. Proxies and a complete set of request headers are essential to avoid being blocked by Amazon.

Using proxies helps rotate IP addresses to avoid IP bans and rate limits from Amazon. This is crucial for large-scale scraping, both to maintain anonymity and to prevent detection. Here, the proxy details are supplied by the proxy service.

Including headers such as Accept-Encoding, Accept-Language, Referer, Connection, and Upgrade-Insecure-Requests makes the request resemble one from a legitimate browser, reducing the chance of it being flagged as a bot.

import requests

url = "https://www.amazon.com/Portable-Mechanical-Keyboard-MageGee-Backlit/product-reviews/B098LG3N6R/ref=cm_cr_dp_d_show_all_btm?ie=UTF8&reviewerType=all_reviews"

# Example proxy details supplied by the proxy service
proxy = {
    'http': 'http://your_proxy_ip:your_proxy_port',
    'https': 'https://your_proxy_ip:your_proxy_port'
}

headers = {
    'accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,image/avif,image/webp,image/apng,*/*;q=0.8,application/signed-exchange;v=b3;q=0.7',
    'accept-language': 'en-US,en;q=0.9',
    'cache-control': 'no-cache',
    'dnt': '1',
    'pragma': 'no-cache',
    'sec-ch-ua': '"Not/A)Brand";v="99", "Google Chrome";v="91", "Chromium";v="91"',
    'sec-ch-ua-mobile': '?0',
    'sec-fetch-dest': 'document',
    'sec-fetch-mode': 'navigate',
    'sec-fetch-site': 'same-origin',
    'sec-fetch-user': '?1',
    'upgrade-insecure-requests': '1',
    'user-agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/91.0.4472.124 Safari/537.36',
}

# Send an HTTP GET request to the URL with the headers and proxy
try:
    response = requests.get(url, headers=headers, proxies=proxy, timeout=10)
    response.raise_for_status()  # Raise an exception for bad response status
except requests.exceptions.RequestException as e:
    print(f"Error: {e}")
    raise SystemExit(1)  # Stop here: without a response there is nothing to parse
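The IP rotation described above can be sketched as a small retry loop that switches to the next proxy after each failed attempt. This is an illustration under stated assumptions rather than a definitive implementation: `make_proxy`, `fetch_with_rotation`, and the `fetch` callable are hypothetical names, and the addresses are placeholders. The request function is passed in as a parameter so the rotation logic stays independent of any particular HTTP library.

```python
import itertools
import time


def make_proxy(address):
    """Build a requests-style proxies mapping for one 'ip:port' address."""
    return {"http": f"http://{address}", "https": f"https://{address}"}


def fetch_with_rotation(fetch, url, addresses, attempts=3, delay=0.0):
    """Call fetch(url, proxies), moving to the next proxy after each failure.

    `fetch` is any callable that returns a response or raises on failure,
    for example a thin wrapper around requests.get (an assumption here).
    """
    pool = itertools.cycle(addresses)
    last_error = None
    for _ in range(attempts):
        proxies = make_proxy(next(pool))
        try:
            return fetch(url, proxies)
        except Exception as error:
            # Broad catch keeps the sketch library-agnostic; a real script
            # would narrow this to requests.exceptions.RequestException.
            last_error = error
            time.sleep(delay)
    raise RuntimeError(f"All {attempts} attempts failed") from last_error
```

With Requests, `fetch` could be a short wrapper that calls `requests.get(url, headers=headers, proxies=proxies, timeout=10)` followed by `raise_for_status()`, so that blocked or failed responses trigger the next proxy.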

Parse the HTML content of the response with BeautifulSoup to extract the details common to the whole product: its URL, title, and total rating.

from bs4 import BeautifulSoup

soup = BeautifulSoup(response.content, 'html.parser')

# Extract the details common to the whole product
product_url = soup.find('a', {'data-hook': 'product-link'}).get('href', '')
product_title = soup.find('a', {'data-hook': 'product-link'}).get_text(strip=True)
total_rating = soup.find('span', {'data-hook': 'rating-out-of-text'}).get_text(strip=True)
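Note that `find()` returns `None` when no element matches, for example when Amazon serves a CAPTCHA page or changes its markup, and calling `.get_text()` on `None` raises `AttributeError`. The helpers below are a minimal sketch of a more defensive approach. The names `text_or_default`, `attr_or_default`, and `absolute_url` are hypothetical, and the assumption that the product link may be a relative path is mine, not something the page guarantees.

```python
from urllib.parse import urljoin

BASE_URL = "https://www.amazon.com"


def text_or_default(element, default=""):
    """Return the stripped text of an element, or default if find() gave None."""
    if element is None:
        return default
    return element.get_text(strip=True)


def attr_or_default(element, name, default=""):
    """Return an attribute of an element, or default if it is missing."""
    if element is None:
        return default
    return element.get(name, default)


def absolute_url(href, base=BASE_URL):
    """Resolve a possibly relative href against the site root."""
    return urljoin(base, href) if href else ""
```

For example, `product_title = text_or_default(soup.find('a', {'data-hook': 'product-link'}))` yields an empty string instead of raising when the link is absent, and `absolute_url(attr_or_default(link, 'href'))` gives a URL that can be opened directly.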

Continue parsing the HTML content to extract reviewer names, ratings, and comments, using the tags and class names identified during inspection.

reviews = []

review_elements = soup.find_all('div', {'data-hook': 'review'})

for review in review_elements:
    author_name = review.find('span', class_='a-profile-name').get_text(strip=True)
    rating_given = review.find('i', class_='review-rating').get_text(strip=True)
    comment = review.find('span', class_='review-text').get_text(strip=True)

    reviews.append({
        'Product URL': product_url,
        'Product Title': product_title,
        'Total Rating': total_rating,
        'Author': author_name,
        'Rating': rating_given,
        'Comment': comment,
    })
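The ratings collected above are stored as display text. For numeric analysis it can help to convert them to numbers. The sketch below assumes the text begins with the score, as in "4.0 out of 5 stars". That format is an assumption about the page, and `parse_rating` is a hypothetical helper name.

```python
import re


def parse_rating(text):
    """Return the first number in a rating string as a float, or None."""
    match = re.search(r"\d+(?:[.,]\d+)?", text)
    if match is None:
        return None
    # Accept a decimal comma as well, as used on some localized pages
    return float(match.group().replace(",", "."))
```

Returning `None` for unparseable text, rather than raising, lets the loop keep the remaining reviews even when one rating is missing or formatted differently.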

Use Python's built-in csv module to save the extracted data to a CSV file for further analysis.

import csv

# Define the CSV file path
csv_file = 'amazon_reviews.csv'

# Define the CSV field names
fieldnames = ['Product URL', 'Product Title', 'Total Rating', 'Author', 'Rating', 'Comment']

# Write the data to the CSV file
with open(csv_file, mode='w', newline='', encoding='utf-8') as file:
    writer = csv.DictWriter(file, fieldnames=fieldnames)
    writer.writeheader()
    for review in reviews:
        writer.writerow(review)

print(f"Data saved to {csv_file}")
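Review comments often contain commas, quotes, and line breaks, so it is worth confirming that the file reads back exactly as written. The following sketch writes a made-up row to a temporary file and loads it again with `csv.DictReader`. The sample data and the helper names `write_reviews` and `read_reviews` are illustrative assumptions.

```python
import csv
import os
import tempfile

FIELDNAMES = ['Product URL', 'Product Title', 'Total Rating', 'Author', 'Rating', 'Comment']


def write_reviews(path, rows):
    """Write review dictionaries to a CSV file with a header row."""
    with open(path, mode='w', newline='', encoding='utf-8') as file:
        writer = csv.DictWriter(file, fieldnames=FIELDNAMES)
        writer.writeheader()
        writer.writerows(rows)


def read_reviews(path):
    """Read the CSV file back into a list of dictionaries."""
    with open(path, newline='', encoding='utf-8') as file:
        return list(csv.DictReader(file))


# Made-up row for illustration; the comment contains a comma, quotes, and a newline
sample_rows = [{
    'Product URL': 'https://www.amazon.com/dp/EXAMPLE',
    'Product Title': 'Example keyboard',
    'Total Rating': '4.5 out of 5',
    'Author': 'Alice',
    'Rating': '5.0 out of 5 stars',
    'Comment': 'Great keys, but "loud".\nWould buy again.',
}]

with tempfile.TemporaryDirectory() as folder:
    path = os.path.join(folder, 'amazon_reviews_check.csv')
    write_reviews(path, sample_rows)
    loaded = read_reviews(path)

print("Round trip matches:", loaded == sample_rows)
```

Opening the file with `newline=''` for both writing and reading is what lets the csv module handle embedded line breaks inside quoted fields.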

Here is the complete code to scrape Amazon review data and save it to a CSV file:

import requests
from bs4 import BeautifulSoup
import csv
import urllib3

# Suppress the warnings caused by verify=False below
urllib3.disable_warnings()

# URL of the Amazon product reviews page
url = "https://www.amazon.com/Portable-Mechanical-Keyboard-MageGee-Backlit/product-reviews/B098LG3N6R/ref=cm_cr_dp_d_show_all_btm?ie=UTF8&reviewerType=all_reviews"

# Proxy supplied by the proxy service, with IP authorization
path_proxy = 'your_proxy_ip:your_proxy_port'
proxy = {
    'http': f'http://{path_proxy}',
    'https': f'https://{path_proxy}'
}

# Headers for the HTTP request
headers = {
    'accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,image/avif,image/webp,image/apng,*/*;q=0.8,application/signed-exchange;v=b3;q=0.7',
    'accept-language': 'en-US,en;q=0.9',
    'cache-control': 'no-cache',
    'dnt': '1',
    'pragma': 'no-cache',
    'sec-ch-ua': '"Not/A)Brand";v="99", "Google Chrome";v="91", "Chromium";v="91"',
    'sec-ch-ua-mobile': '?0',
    'sec-fetch-dest': 'document',
    'sec-fetch-mode': 'navigate',
    'sec-fetch-site': 'same-origin',
    'sec-fetch-user': '?1',
    'upgrade-insecure-requests': '1',
    'user-agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/91.0.4472.124 Safari/537.36',
}

# Send an HTTP GET request to the URL with the headers and handle exceptions
try:
    response = requests.get(url, headers=headers, timeout=10, proxies=proxy, verify=False)
    response.raise_for_status()  # Raise an exception for bad response status
except requests.exceptions.RequestException as e:
    print(f"Error: {e}")
    raise SystemExit(1)  # Stop here: without a response there is nothing to parse

# Parse the HTML content using BeautifulSoup
soup = BeautifulSoup(response.content, 'html.parser')

# Extract the details common to the whole product
product_url = soup.find('a', {'data-hook': 'product-link'}).get('href', '')  # Extract product URL
product_title = soup.find('a', {'data-hook': 'product-link'}).get_text(strip=True)  # Extract product title
total_rating = soup.find('span', {'data-hook': 'rating-out-of-text'}).get_text(strip=True)  # Extract total rating

# Extract the individual reviews
reviews = []
review_elements = soup.find_all('div', {'data-hook': 'review'})

for review in review_elements:
    author_name = review.find('span', class_='a-profile-name').get_text(strip=True)  # Extract author name
    rating_given = review.find('i', class_='review-rating').get_text(strip=True)  # Extract rating given
    comment = review.find('span', class_='review-text').get_text(strip=True)  # Extract review comment

    # Store each review in a dictionary
    reviews.append({
        'Product URL': product_url,
        'Product Title': product_title,
        'Total Rating': total_rating,
        'Author': author_name,
        'Rating': rating_given,
        'Comment': comment,
    })

# Define the CSV file path
csv_file = 'amazon_reviews.csv'

# Define the CSV field names
fieldnames = ['Product URL', 'Product Title', 'Total Rating', 'Author', 'Rating', 'Comment']

# Write the data to the CSV file
with open(csv_file, mode='w', newline='', encoding='utf-8') as file:
    writer = csv.DictWriter(file, fieldnames=fieldnames)
    writer.writeheader()
    for review in reviews:
        writer.writerow(review)

# Print a confirmation message
print(f"Data saved to {csv_file}")

In conclusion, choosing reliable proxy servers is a key step in writing a web scraping script. It helps the scraper bypass blocks and protects it against anti-bot filters. The most suitable options for scraping are residential proxies, which offer a high trust factor and dynamic IP addresses, and static ISP proxies, which provide high speed and operational stability.
